I don’t know why, but whenever something from the Center for Open Science pops up in my news feeds, my fingers get twitchy and I start to imagine writing some response (see also). It may be because @BrianNosek and I used to play volleyball together and he was a terrific athlete whose awesome v-ball spikes I am still imagining trying to block (true story).

COS recently posted an article titled “One Preregistration to Rule Them All?” discussing the mechanics of preregistering a study when there are multiple sub-studies and interlinked collaborative studies. This led my colleague @jplotkin to comment tongue-in-cheek that it would make @BrianNosek Sauron and @OSFramework Mount Doom. While I am sure Josh meant it as a joke, I thought the “Rule Them All” and “Sauron” comments were an apt metaphor for the well-intentioned efforts of COS, efforts that I have a hard time buying into.

It is common to think that the main failure of Communism, with its ideal of “From each according to his ability, to each according to his need”, was mostly a failure of human nature: our greed and our laziness were unable to live up to those lofty ideals. But communism has a much more critical obstacle than mere human foibles. Communism, capitalism, socialism, etc. are economic systems, each sharing the main goal of matching supply (from one’s ability) to demand (one’s need). So, any system has to solve the problem of how to allocate labor and resources to create supplies that meet the demands of every member of society, a problem involving literally billions of variables. Free markets are basically a heuristic solution to the problem of optimal allocation. If we want to employ a different approach, say rational planning (e.g., communism), then we need to solve the computational problem of optimal resource allocation studied by Leonid Kantorovich (the only Soviet Nobel laureate in economics). Of course, no one has a practical solution for optimizing an entire economy over billions of variables.

I previously suggested that the main activity of science is to exchange ideas. Each of us supplies ideas and data, and we consume the ideas and data created by others. We might think of each journal as a kind of market where we each display our wares in the hope that some might buy them. Like real markets, there is some regulation to prevent fraud, but for the most part, supply and demand are dynamically regulated by the actors themselves. The current journal system has a lot of problems, but as a heuristic solution to the optimal allocation problem of getting the “true” knowledge to those who need it, the system works pretty well, albeit with caveat emptor.

COS and its supporters are focused on the errors and negatives of such a heuristic solution and want to create systems to rationally distribute knowledge. Like early communists, I am sure their goals are motivated by honest wishes for improving society. Yet, in the end, whatever system they construct must not only eliminate the problems of the free market but also provide solutions to the optimal allocation problem. And solving this problem involves not only the intractable computational problem of optimization but also the more subtle problem of taking into account “unknown but possible worlds” variables. For example, before deciding on what to cook for dinner, I often browse the aisles at my grocery store to see if something is inspiring. The result is quite different from what would have happened if somebody had delivered a bunch of groceries to my door. Similarly, flawed studies like the famous ‘arsenic-life’ study or, say, the human cloning study actually triggered all kinds of interesting science.

As ominously presaged by the title of their own blog article “Preregistration: A plan, not a prison”, creating a global plan without truly solving the allocation problem is highly likely to fall short of the heuristic free market solution and to feel very much like a prison to the players put in sub-optimal positions. Of course, when we add human foibles to all this, it might be good to remember how the well-intended revolutionaries soon moved to the absolutism of the Bolsheviks and the subsequent, even more unfortunate, autocratic events.

So, do we really want sanitized science delivered in a Harvest Box every month? For me, I really enjoy my occasional Big Mac and I’d rather take my chances with some fake p-values and irreproducible results.

[[Addendum]]

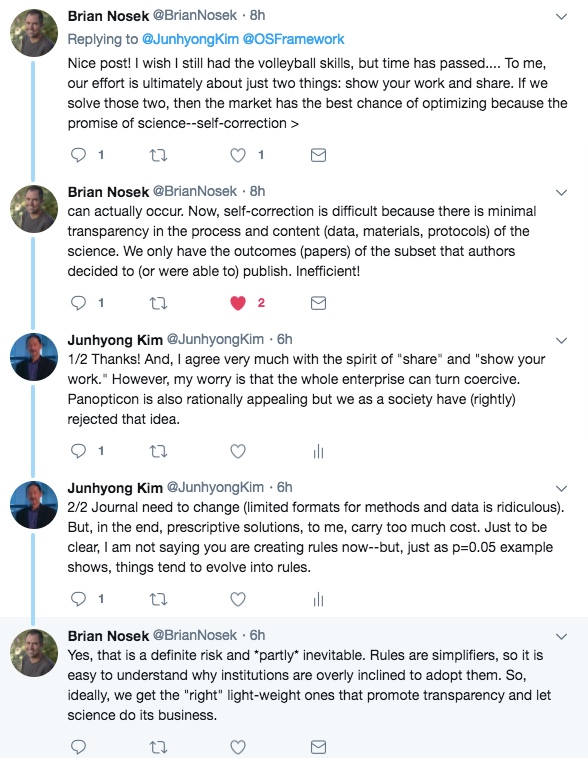

After I posted this, @BrianNosek kindly replied to my tweet and we had the following exchange:

So, I think it comes down to where each of us thinks the “right light-weight” line sits. If someone commits a bad act, society clearly incurs a cost. We can take various actions to suppress the probability of the bad act, lowering the expected cost. But every suppressive action has its own cost, and we have to consider the total cost. In general, simple punitive actions (e.g., censuring a researcher when they commit fraud) are less costly to execute than controlling everybody’s actions for prevention (think taking off your shoes at the airport). This is Foucault’s “punitive city” versus “coercive institution” dichotomy.

Which action minimizes total cost depends on the cost of the bad act and on the incremental suppression costs vis-a-vis the incremental reduction of the bad act. We could have situations like the left figure below or the right figure. In fact, sometimes the lowest cost might be to do nothing (e.g., children trespassing on grass). And I admit some actions should be absolutely prevented despite enormous costs of suppression. The difficulty is we don’t know what the cost functions look like for the issue of reproducibility in science. (Or, for that matter, we don’t seem to investigate cost functions for most rules we adopt or don’t adopt.) However, once put in place, coercive institutions have an insidious tendency toward viral spread and toward the economic entrenchment of interests in maintaining the “political technology” of coercion. I think we are all pretty familiar with that.
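
For readers who like to see the cost argument written down, here is a minimal sketch. The symbols are my own shorthand and not anything measured: $s \ge 0$ is the amount of suppressive effort, $p(s)$ is the probability of the bad act (assumed decreasing in $s$), $B$ is the cost to society when the bad act occurs, and $K(s)$ is the cost of suppression itself (assumed increasing, with $K(0) = 0$). I also assume both functions are smooth and that the total cost is convex, which real cost functions need not be.

```latex
% Total expected cost of choosing suppression level s (all symbols are assumptions)
T(s) = p(s)\,B + K(s)

% Interior optimum: marginal suppression cost equals marginal reduction in expected harm
T'(s^{*}) = 0 \quad\Longleftrightarrow\quad K'(s^{*}) = -\,p'(s^{*})\,B

% "Do nothing" is optimal when even the first unit of suppression costs more than it saves
K'(0) \;\ge\; -\,p'(0)\,B \quad\Longrightarrow\quad s^{*} = 0
```

Under these assumptions, the trespassing-on-grass case is the last line: $B$ is small, so no suppression pays for itself. The "absolutely prevent" case is the opposite extreme, where $B$ is so large that the optimum sits at a high $s^{*}$ even though $K(s^{*})$ is enormous. The point in the text stands either way: without knowing the shapes of $p$ and $K$ for reproducibility in science, we cannot say where $s^{*}$ lies.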